Lyndon Lam

I am currently a postbaccalaureate researcher in ML Foundations at the Kempner Institute. Previously, I graduated from Cal Poly Pomona.

This is me.

News

Research

I am interested in multimodal datasets and models. I am particularly interested in cross-modal transfer learning, where training a model on one modality (e.g., text-only QA) can improve performance on another modality (e.g., visual QA). Outside of multimodal learning but in a similar vein, I am also interested in cross-lingual transfer learning, such as training multilingual LLMs on English-only mathematical reasoning data and observing gains on Chinese math reasoning benchmarks. Broadly, I like training and advancing multimodal models, using methods that are efficient in both training and data.

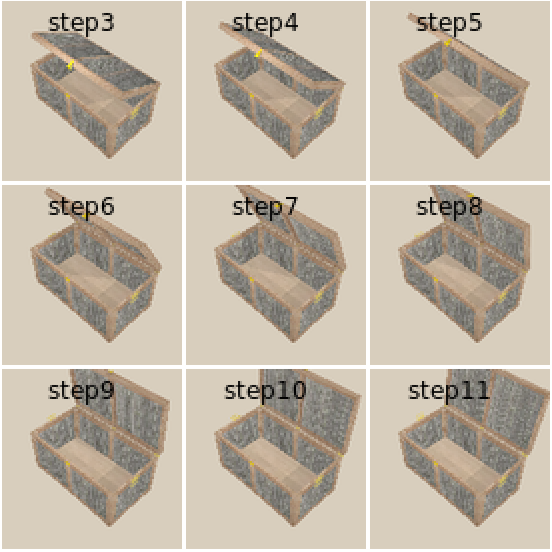

Skill Generalization With Verbs

Rachel Ma, Lyndon Lam, Benjamin A. Spiegel, Aditya Ganeshan, Roma Patel, Ben Abbatematteo, David Paulius, Stefanie Tellex, George Konidaris

IROS, 2023

project page / pdf

Proposed a two-part model consisting of a classifier and an optimizer to generalize manipulation skills to novel object categories using verbs.
